If someone asks me to check how a Linux/UNIX system is performing right now, the first thing I run is vmstat. A lot of people check only the CPU and memory utilization statistics in vmstat, but it reports much more than that. In this post, I am going to explain vmstat in detail.

vmstat stands for virtual memory statistics; it collects and displays summary information about processes, memory, paging, block I/O, interrupts, and CPU activity. By specifying an interval, you can use it to observe system activity interactively.

Most commonly, people pass two numeric arguments to vmstat: the first is the delay (sleep) between updates in seconds, and the second is how many updates you want to see before vmstat quits. Please note that this is not the full syntax of vmstat, and it can vary between OSs. Please refer to your OS man page for more information.

To run vmstat with 7 updates, 10 seconds apart, type:

#vmstat 10 7
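If you want to collect the same output from a script instead of typing the command, a minimal Python sketch could look like the following. The helper names `vmstat_command` and `run_vmstat` are made up for illustration, and the sketch assumes a `vmstat` binary on the PATH that accepts the delay and count arguments described above.

```python
import subprocess


def vmstat_command(delay_seconds, count):
    """Build the argument list for `vmstat <delay> <count>`."""
    if delay_seconds <= 0 or count <= 0:
        raise ValueError("delay and count must be positive integers")
    return ["vmstat", str(delay_seconds), str(count)]


def run_vmstat(delay_seconds, count):
    """Run vmstat and return its raw text output.

    Blocks for roughly delay_seconds * (count - 1) seconds,
    because vmstat sleeps between updates.
    """
    result = subprocess.run(
        vmstat_command(delay_seconds, count),
        capture_output=True,  # collect stdout/stderr instead of printing
        text=True,            # decode bytes to str
        check=True,           # raise CalledProcessError on a non-zero exit
    )
    return result.stdout
```

Calling `run_vmstat(10, 7)` would be the scripted equivalent of the command above.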

Please note that on some systems the reported metrics might be slightly different. The headings I describe here are the ones reported on Oracle Linux (Unbreakable Oracle Linux).

Process Block: Provides details of the processes that are running or waiting for something (it can be CPU, I/O, etc.; potentially any resource)

r --> Runnable processes, i.e. processes running on or waiting for a CPU. The higher the count, the more processes are waiting to run. An occasional spike in the count shouldn't be treated as a bottleneck. If the value is constantly high (most people treat more than 2 * CPU count as high), it hints that CPU is the bottleneck.

b --> Uninterruptible sleeping processes, also known as “blocked” processes. These processes are most likely waiting for I/O, but could be waiting for something else too.

w --> Number of processes that can run but have been swapped out to the swap area. This value hints at a memory bottleneck. Please remember that only some systems report this count.
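The "constantly high, not just a spike" rule for r can be sketched in a few lines of Python. The function name, the `min_share` parameter, and its 0.8 default are my own assumptions for illustration; only the 2 * CPU count rule of thumb comes from the text above.

```python
import os


def cpu_looks_saturated(r_values, cpu_count=None, factor=2, min_share=0.8):
    """Return True if the run queue stays above factor * cpu_count.

    r_values  -- the "r" column from consecutive vmstat samples
    cpu_count -- number of CPUs; defaults to what the OS reports
    min_share -- assumed cut-off: fraction of samples that must be
                 high before we call it "constantly" high
    """
    if cpu_count is None:
        cpu_count = os.cpu_count() or 1
    if not r_values:
        return False
    threshold = factor * cpu_count
    high = sum(1 for r in r_values if r > threshold)
    return high / len(r_values) >= min_share
```

With 4 CPUs, a series such as 9, 10, 12, 9, 11 would be flagged, while 1, 0, 20, 1, 2 would not, because a single spike is not a sustained queue.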

Memory Block: Provides detailed memory statistics

swpd --> Amount of virtual memory used, i.e. memory that has been swapped out to the swap area

free --> Amount of free physical memory (RAM)

buff --> Amount of memory used as buffers. This memory is used to store file metadata such as i-nodes and data from raw block devices

cache --> Amount of physical memory used as cache (mostly cached file data)

Note: Most systems report the memory block values in KB. Remember I said most, not all. So check the man page.

Swap Block: Provides memory swap information

si --> Rate at which memory is swapped in from disk back to physical RAM

so --> Rate at which memory is swapped out from physical RAM to disk

Note: Most systems report the swap block values in KB per second. Check the man page.

I/O Block: I/O related information

bi --> Rate at which the system reads data from block devices (in blocks/sec)

bo --> Rate at which the system writes data to block devices (in blocks/sec)

System Block: System information

in --> Number of interrupts received by the system per second

cs --> Number of context switches per second

CPU Block: The most commonly used CPU-related information

us --> Percentage of CPU time spent running user-space (non-kernel) code. Most user/application/database processes fall into this category

sy --> Percentage of CPU time spent running kernel code, for example system calls made on behalf of processes. A process owned by root still counts under us while it runs its own code

id --> Percentage of time the CPU was idle

wa --> Percentage of time the CPU was idle while waiting for I/O to complete

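A lot of people have problems here. Some people say us + sy + id + wa = 100 and others say us + sy + id = 100. On current Linux kernels wa is reported separately from id, so the displayed columns (plus st, where it is shown) add up to about 100; before kernel 2.5.41, I/O wait was included in id. Either way, I stick to counting only us + sy as CPU in use (I/O wait doesn’t consume CPU).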
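A small sketch to make the arithmetic concrete: it adds up the CPU columns of one sample so you can see which identity your vmstat follows, and it treats only us + sy as busy, in line with the view above that I/O wait doesn't consume CPU. The function name and the dictionary layout are assumptions for illustration, and the numbers in the usage line are made up.

```python
def cpu_breakdown(sample):
    """Summarise the CPU columns of one vmstat sample.

    sample is a dict with integer percentages under the keys
    "us", "sy", "id", "wa" and optionally "st".
    """
    busy = sample["us"] + sample["sy"]      # CPU actually executing code
    waiting = sample["wa"]                  # idle, but with I/O outstanding
    idle = sample["id"]                     # idle with nothing pending
    total = busy + waiting + idle + sample.get("st", 0)
    return {"busy": busy, "waiting": waiting, "idle": idle, "total": total}


# Made-up sample values, only to show the call.
print(cpu_breakdown({"us": 40, "sy": 10, "id": 20, "wa": 30, "st": 0}))
```

Because vmstat rounds each column, the total on a real system may come out as 99 or 101 rather than exactly 100.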

Interpretation with respect to performance:

The first line of the output shows averages of all the metrics since the system was last restarted. So ignore that line, since it does not show the current status. Each of the other lines shows the metrics for the most recent interval.
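When post-processing vmstat output in a script, dropping that since-boot line is easy to forget. Here is a minimal Python sketch; it assumes the Linux column layout in which the header row starts with `r`, the function names are hypothetical, and the sample text is made up purely for illustration.

```python
# Made-up sample in the Linux vmstat layout, for illustration only.
SAMPLE = """\
procs -----------memory---------- ---swap-- -----io---- -system-- ------cpu-----
 r  b   swpd   free   buff  cache   si   so    bi    bo   in   cs us sy id wa st
 1  0      0 801234  51200 901234    0    0    12    34  110  220  3  1 95  1  0
 6  2      0 790000  51200 905000    0    0   900  1500  450  980 40 10 20 30  0
"""


def parse_vmstat(text):
    """Turn raw vmstat text into a list of {column: int} dicts."""
    header = None
    samples = []
    for line in text.splitlines():
        fields = line.split()
        if not fields:
            continue
        if fields[0] == "r":
            # Column-name row; vmstat repeats it periodically.
            header = fields
            continue
        is_data = header and len(fields) == len(header)
        if is_data and all(f.isdigit() for f in fields):
            samples.append(dict(zip(header, map(int, fields))))
    return samples


def current_samples(samples):
    """Drop the first sample, which holds averages since boot."""
    return samples[1:]


for row in current_samples(parse_vmstat(SAMPLE)):
    print(row["r"], row["b"], row["wa"])
```

The banner row starting with `procs` is skipped automatically because it appears before any column-name row has been seen and never matches the numeric check.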

Ideally, the r/b/w values under the procs block are 0 or close to 0. If one or more of these counters constantly report high values, it means that the system may not have sufficient CPU, memory, or I/O bandwidth.

If the value of swpd under memory is high and keeps changing, together with non-zero si/so values under swap, it means that the system is running short of memory.
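One way to turn "keeps changing" into a check is to look at consecutive samples and count how often swpd moved or swap traffic (si/so) was non-zero. This is only a sketch: the function name and the `min_share` cut-off of 0.5 are assumptions of mine, not a standard threshold.

```python
def swap_pressure(samples, min_share=0.5):
    """Return True if swap usage keeps moving across the samples.

    samples   -- list of dicts with integer "swpd", "si" and "so" values,
                 in the order vmstat printed them
    min_share -- assumed cut-off: fraction of intervals that must show
                 swap movement before we flag memory pressure
    """
    if len(samples) < 2:
        return False
    moving = 0
    for prev, cur in zip(samples, samples[1:]):
        changed = cur["swpd"] != prev["swpd"]
        traffic = cur["si"] > 0 or cur["so"] > 0
        if changed or traffic:
            moving += 1
    return moving / (len(samples) - 1) >= min_share
```

A large but stable swpd with si and so at 0 is not flagged, which matches the idea that old, untouched swapped-out pages are not a sign of a current memory shortage.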

The data under “io” indicates the flow of data to and from the disks. This shows how much disk activity is going on, which does not necessarily indicate a problem (obviously data has to go to disk in order to be persistent). If we see a large number in the “b” column under “procs” (processes being blocked) together with high I/O, the issue could be I/O contention.
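The combination described above (many blocked processes and heavy disk traffic at the same time) can be sketched as a simple check. The function name and the `min_share` default are hypothetical, and the thresholds are deliberately left to the caller because what counts as "large" or "high" depends on the system.

```python
def io_contention_suspected(samples, blocked_min, io_min, min_share=0.8):
    """Return True if most samples show blocked processes AND heavy I/O.

    samples     -- list of dicts with integer "b", "bi" and "bo" values
    blocked_min -- caller-chosen: "b" at or above this counts as large
    io_min      -- caller-chosen: bi + bo at or above this counts as high
    min_share   -- assumed cut-off: fraction of samples that must match
    """
    if not samples:
        return False
    hits = sum(
        1
        for s in samples
        if s["b"] >= blocked_min and s["bi"] + s["bo"] >= io_min
    )
    return hits / len(samples) >= min_share
```

High bi/bo with b near 0 is not flagged, since busy disks that keep up with the load are not a bottleneck by themselves.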

Rule of thumb in Performance

Adding more CPU, memory, or I/O bandwidth to the system is not always the solution to the problem; it just postpones the problem, which can blow up at any time. The real solution is to tune the application (every component in the architecture) as far as possible. Adding hardware capacity or buying more powerful hardware should be the last option.

As usual, comments are always welcome.

-Thiru

Thanks to Anonymous for pointing out the issue in bi/bo.

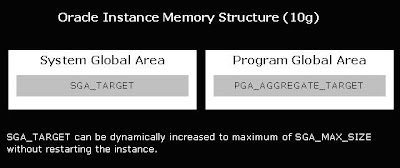

